Recent reports suggest that AI models used for programming may be regressing. According to tests highlighted by IEEE Spectrum, the kind of errors these models produce has shifted between older models such as GPT-4 and newer iterations.

Visible Errors vs. Silent Bugs

The tests point to a distinct difference in the kind of errors each generation produces:

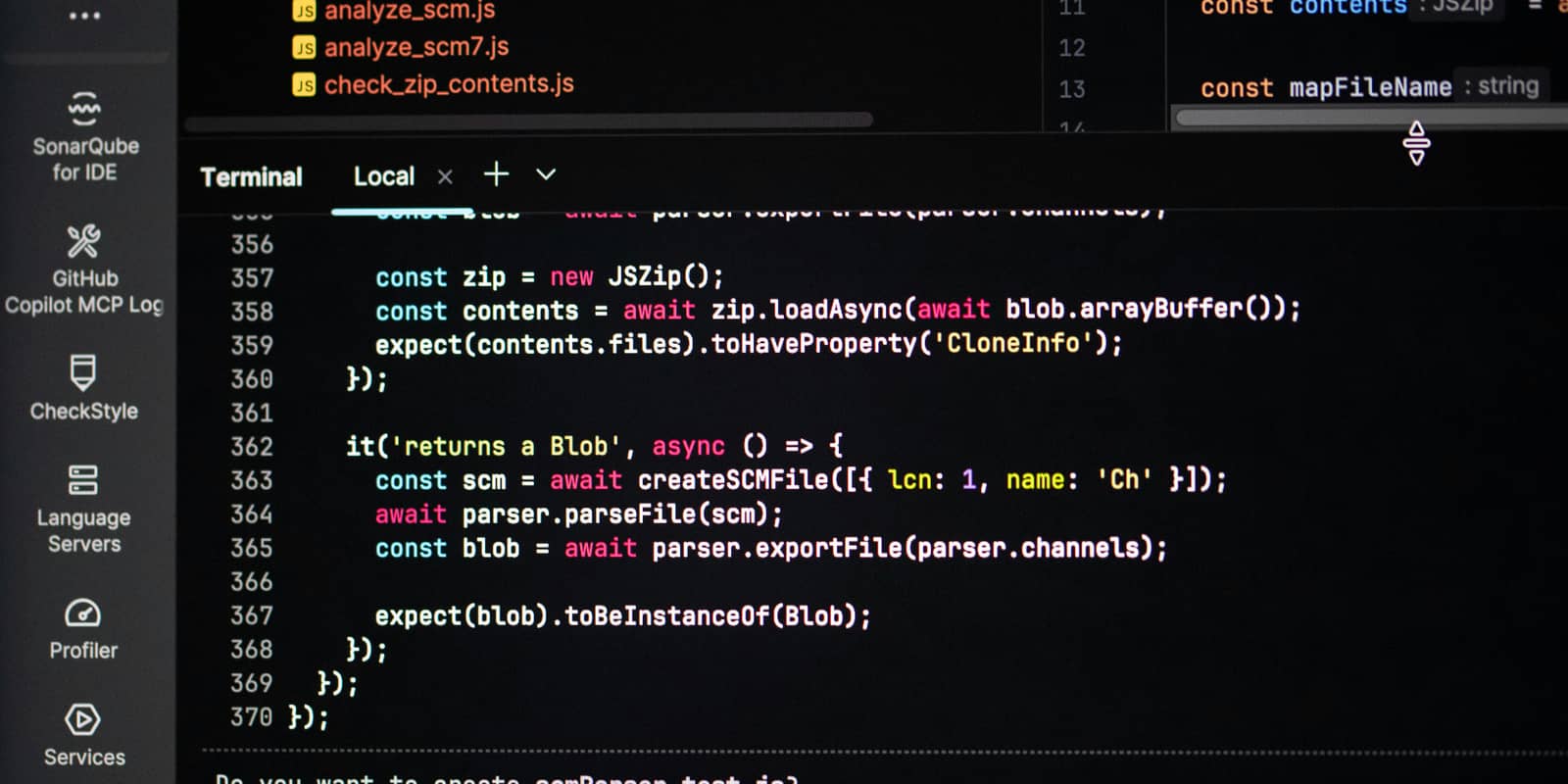

- Older Models (e.g., GPT-4): Tend to produce "loud" errors, such as syntax mistakes. While frustrating, these are easily identified and fixed by developers.

- Newer Models: Often generate code that runs without syntax errors but fails to perform the intended logic. These "silent" bugs are harder to debug and can be more dangerous for production environments.
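To make the distinction concrete, here is a minimal Python sketch. Both snippets are hypothetical and were written for this illustration, not taken from the tests: each is meant to compute the average of a list. The first fails loudly at compile time, while the second runs cleanly and returns a wrong answer.

```python
# Hypothetical "loud" output: the missing colon is a syntax error,
# so Python rejects the code before any of it runs.
loud_source = "def average(values) return sum(values) / len(values)"

try:
    compile(loud_source, "<loud>", "exec")
    loud_failed = False
except SyntaxError:
    loud_failed = True  # the mistake is reported immediately


# Hypothetical "silent" output: valid syntax, no exception, wrong logic.
def silent_average(values):
    # Bug: divides by one more than the number of items.
    return sum(values) / (len(values) + 1)


result = silent_average([2, 4, 6])  # the correct mean would be 4.0
```

Nothing in the second snippet signals a problem at run time; the error only shows up when the result is compared with the value the developer expected.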

Why is this happening?

The proposed theory is a shift in training data. Current AI assistants are increasingly trained on feedback from less experienced users, who often rate a script as "good" simply because it executes without crashing, regardless of whether the underlying logic is correct.
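The weakness of that kind of feedback can be sketched in a few lines of Python. The rating rule below (`rate_by_execution`) is an assumption made up for illustration, not a description of how any real assistant is trained: it calls a function and labels it "good" as long as no exception is raised.

```python
def rate_by_execution(func, *args):
    """Hypothetical rating rule: 'good' if the call finishes without raising."""
    try:
        func(*args)
    except Exception:
        return "bad"
    return "good"


def is_even(n):
    # Logically wrong: this expression is true for odd numbers.
    return n % 2 == 1


rating = rate_by_execution(is_even, 4)   # no exception is raised
actual_answer = is_even(4)               # yet the answer is wrong
```

Under this rule `is_even` earns a "good" rating even though it reports that 4 is not even, because the rule never looks at the returned value.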

Key Takeaway for Developers

As AI models evolve, the risk of "treacherous" code (scripts that look functional but are logically flawed) is increasing. Developers should maintain rigorous manual testing and peer review rather than treating error-free execution of AI-generated code as proof that it is correct.
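One lightweight form of such testing is to compare generated code against a handful of cases whose answers were worked out by hand. The sketch below is illustrative only: `median` stands in for a hypothetical AI-suggested function, and `failing_cases` is a helper name invented here.

```python
def median(values):
    # Hypothetical AI-suggested code: runs without errors, but does not
    # average the two middle items when the list has an even length.
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


def failing_cases(func, cases):
    """Return (input, expected, actual) for every case where func disagrees."""
    failures = []
    for values, expected in cases:
        actual = func(values)
        if actual != expected:
            failures.append((values, expected, actual))
    return failures


# Expected values computed by hand, independently of the code under test.
known_cases = [
    ([1, 3, 5], 3),
    ([1, 2, 3, 4], 2.5),
]

failures = failing_cases(median, known_cases)
```

The odd-length case passes, so a quick manual run could easily look fine; only the even-length case exposes the flaw. In practice the same checks would usually live in a test framework such as `unittest` or `pytest` and be read by a second person during review.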